When Does Technology Stop Being a Tool?

#148: The Moment Our Creations Stop Asking for Permission

For most of human history, technology waited for instruction.

A hammer did nothing until lifted. A printing press required a printer. Even a computer required deliberate input.

Artificial intelligence does something different. It drafts before we think, recommends before we search, filters before we choose. It does not simply extend intention — it anticipates it.

At what point does a tool stop asking for permission?

We are accustomed to calling AI a tool. The word reassures us. Tools obey. Tools remain subordinate. Tools are safe because they are inert.

But what if artificial intelligence is no longer inert?

What if it is becoming something closer to infrastructure — a system that shapes choices before we consciously make them?

That is the threshold this moment forces us to examine.

The Illusion of Neutrality

For centuries, we have clung to a comforting doctrine: technology is neutral. Tools are inert. Only humans are responsible for outcomes.

This view is partially true. A knife can prepare food or cause harm. The moral agent is the person holding it.

But the doctrine of neutrality becomes harder to defend as technologies scale and embed themselves into systems.

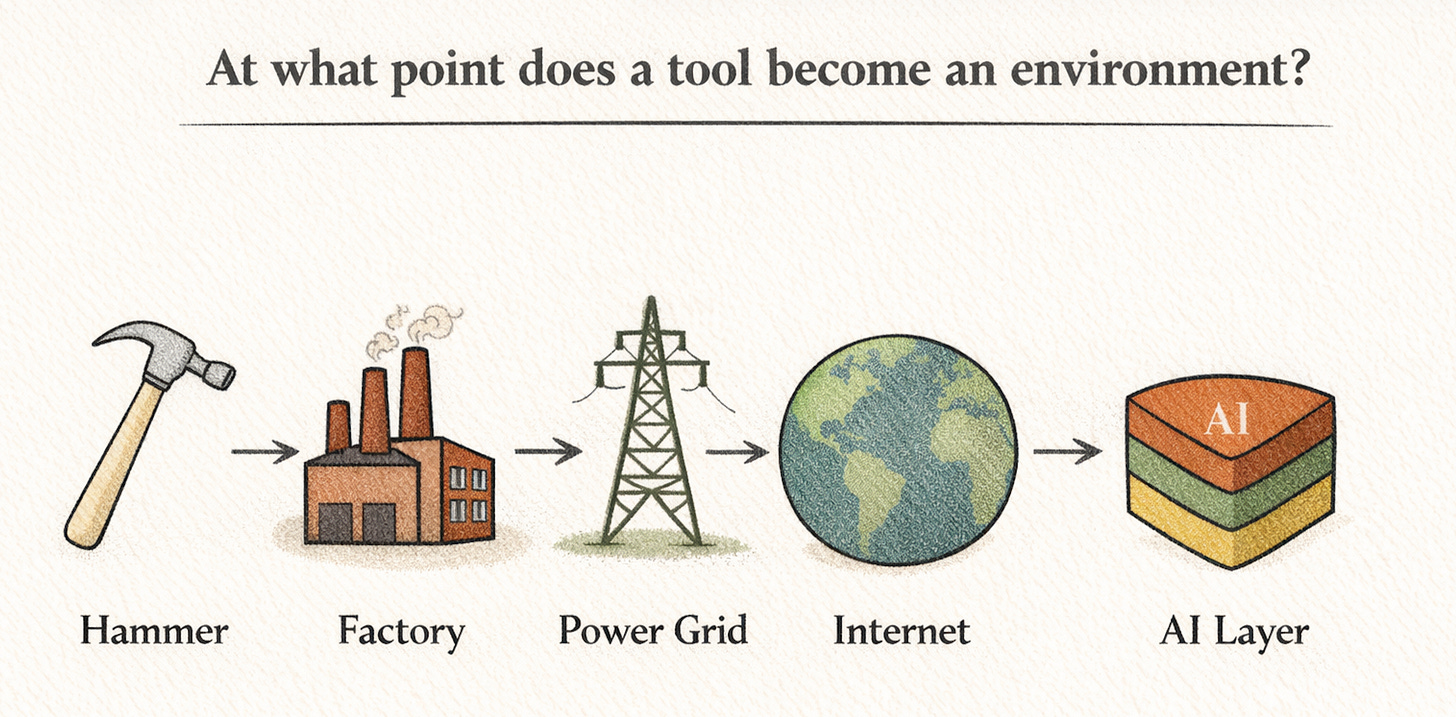

The printing press destabilized religious authority. Industrial machinery reorganized labor and urban life. Social media reshaped attention and political discourse.

Each began as a tool. Each evolved into an environment.

More recently, platforms like Facebook and Twitter (now X) did not simply connect friends. Their algorithms reordered visibility itself — amplifying outrage, filtering information, and quietly restructuring public conversation at planetary scale.

Were these still tools?

Technically, yes.

Functionally, no.

They became systems of influence.

And the distinction matters.

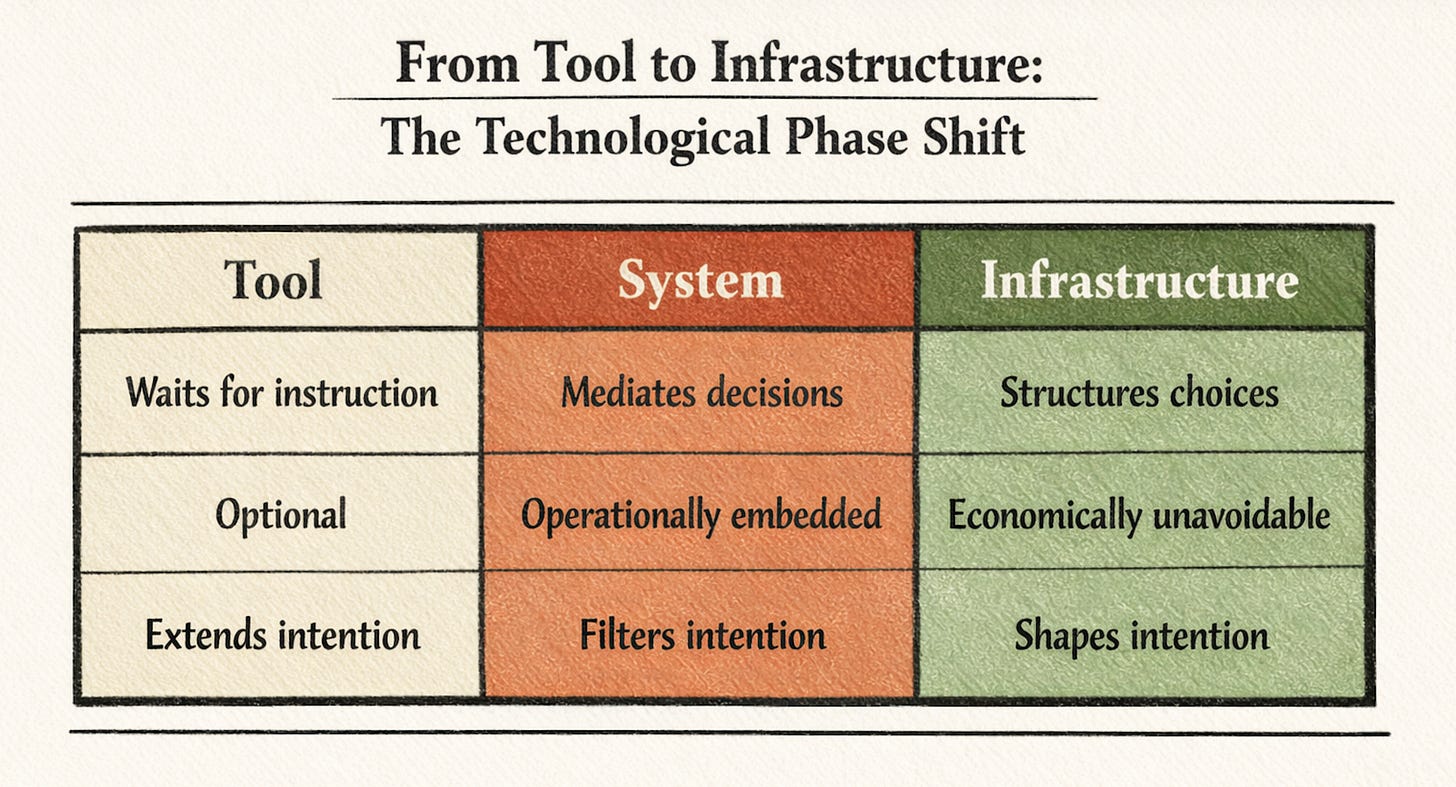

A tool waits to be used.

A system conditions how it will be used.

A tool extends intention.

A system shapes intention.

Artificial intelligence sits squarely in that tension.

AI: Tool, Agent, or Infrastructure?

When people talk about AI today, they often point to systems like ChatGPT, Midjourney, and GitHub Copilot.

On the surface, they are clearly tools. You prompt; they respond. You decide whether to accept the output. They assist; you remain in control.

But the story becomes more complex as AI systems:

Generate text that influences public opinion

Automate decision-making in hiring, lending, and sentencing

Personalize media streams at scale

Act autonomously in financial markets

Mediate education and healthcare workflows

AI is not just accelerating tasks. It is mediating judgment.

Artificial intelligence marks the emergence of what I call Cognitive Infrastructure (introduced in my book “Agentic Strategy”): systems that do not merely execute tasks, but structure the environment in which human judgment occurs.

Just as physical infrastructure organizes movement and digital infrastructure organizes information, cognitive infrastructure organizes thought.

Once intelligence becomes infrastructural, it no longer waits for instruction. It shapes the terrain in which decisions unfold.

And once a system begins mediating judgment at scale, it stops being merely instrumental. It becomes infrastructural.

Infrastructure is not something you pick up and put down. It becomes the background condition of life.

Electricity is not a tool; it is an environment.

The internet is not a tool; it is an ecosystem.

Increasingly, AI is becoming both.

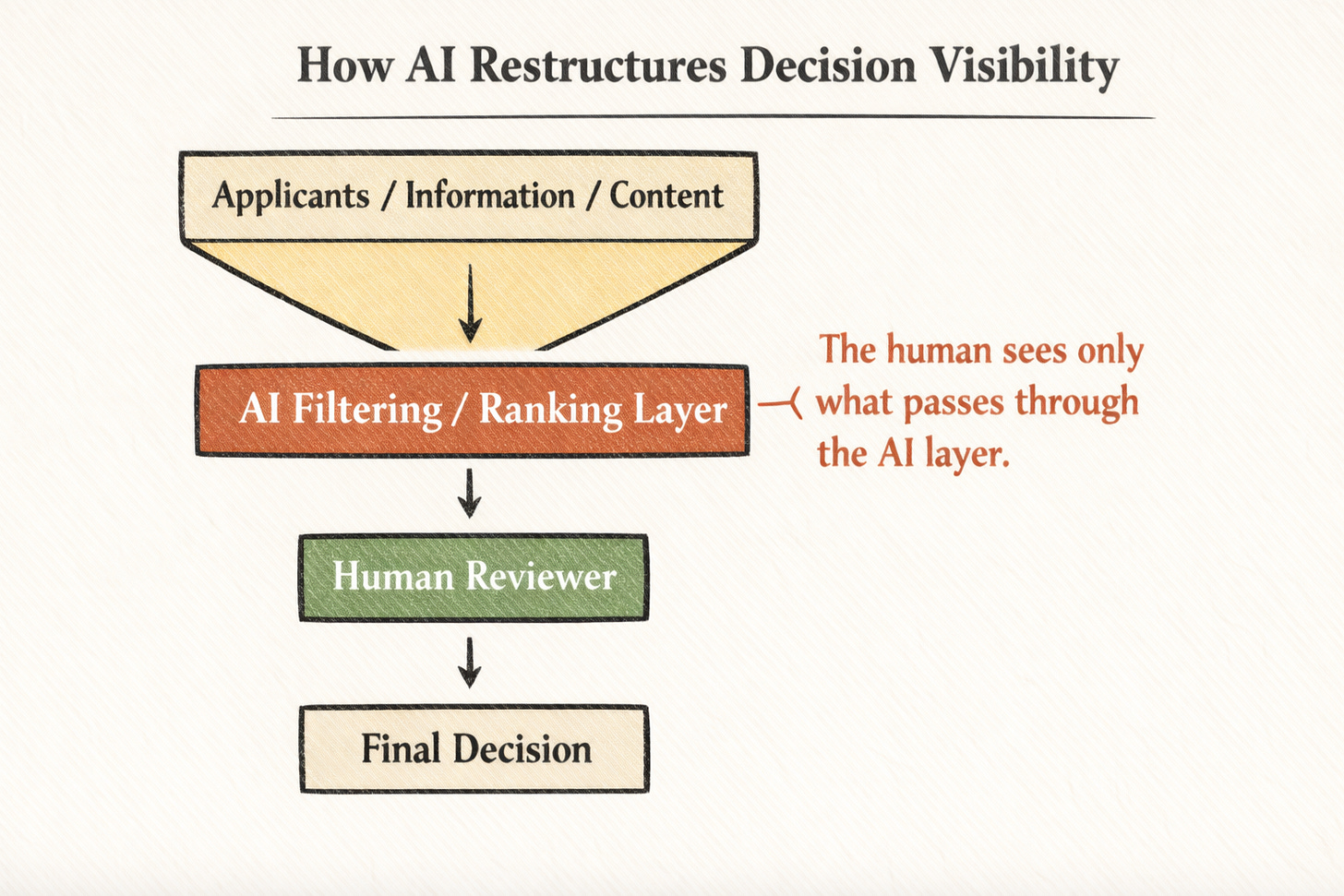

Consider hiring.

In many large organizations, AI systems now screen résumés before a human ever reviews them. These systems rank candidates, filter attributes, and predict “fit” based on historical patterns. In theory, a human remains in the loop. In practice, the human often sees only what the system chooses to surface.

At that point, AI is no longer assisting judgment — it is structuring it.

The tool does not merely accelerate a decision; it defines the field of visibility within which decisions are possible.

This is the subtle but critical shift from instrument to gatekeeper.

In that moment, artificial intelligence is no longer a productivity tool — it is institutional architecture.
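
The screening pattern described above can be made concrete with a small sketch. It is a toy illustration rather than a description of any real hiring product: the `Candidate` type, the `score` field, the cutoff `k`, and the stand-in reviewer are all hypothetical. It shows only that the reviewer's choice, however independent, is made inside a set the system selected.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    name: str
    score: float  # hypothetical model-predicted "fit"; higher is better


def surface_shortlist(candidates: list[Candidate], k: int) -> list[Candidate]:
    """Return only the k highest-scoring candidates, as a screening step might."""
    ranked = sorted(candidates, key=lambda c: c.score, reverse=True)
    return ranked[:k]


def human_review(shortlist: list[Candidate]) -> Candidate:
    """Stand-in for human judgment, deliberately unrelated to the score.

    The reviewer here picks the alphabetically first name, to show that
    the choice is free but confined to whatever was surfaced.
    """
    return min(shortlist, key=lambda c: c.name)


applicants = [
    Candidate("Avery", 0.41),
    Candidate("Blake", 0.87),
    Candidate("Casey", 0.79),
    Candidate("Devon", 0.35),
    Candidate("Emery", 0.92),
]

shortlist = surface_shortlist(applicants, k=3)
never_seen = [c.name for c in applicants if c not in shortlist]
choice = human_review(shortlist)

print("Surfaced:", [c.name for c in shortlist])
print("Never seen by the reviewer:", never_seen)
print("Reviewer's choice:", choice.name)
```

The human is still "in the loop" in this sketch, yet two of the five applicants never reach them. Changing `k` or the scoring changes the outcome without the reviewer doing anything differently.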

Historical Precedents: When Tools Became Environments

To understand where AI might be heading, we should look backward.

1. The Industrial Machine

The steam engine was a tool. Factories were not.

When machinery reorganized labor into centralized industrial production, it reshaped human rhythms around shifts and time clocks. Workers adapted themselves to machines rather than machines adapting to workers.

Technology crossed a threshold: from assistance to structuring force.

2. The Algorithmic Feed

Search engines began as navigational aids. But as companies like Google refined ranking systems, search results began shaping what knowledge was visible and credible.

Similarly, recommendation algorithms on platforms like YouTube didn’t just reflect preferences — they reinforced and amplified them.

The tool began shaping desire.

When a system influences what you think, buy, believe, or vote for — at scale — the line between tool and environment dissolves.
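
The reinforcement dynamic can be sketched as a deliberately simple feedback loop. This is a toy model under stated assumptions, not how any real platform's recommender works: `preference` is a hypothetical number between 0 and 1 for a user's share of interest in one topic, the feed always shows whichever topic currently leads, and each exposure moves the preference toward what was shown by a small, assumed `nudge`.

```python
def simulate_feed(preference: float, rounds: int, nudge: float = 0.05) -> float:
    """Return the user's preference for topic A after repeated recommendations.

    preference: initial share of interest in topic A, between 0 and 1.
    rounds:     number of recommendation cycles.
    nudge:      assumed fraction by which each exposure shifts preference
                toward the topic that was shown.
    """
    for _ in range(rounds):
        # The feed reflects the current preference by showing the leading topic.
        shown_is_a = preference >= 0.5
        target = 1.0 if shown_is_a else 0.0
        # Exposure then moves the preference a little toward what was shown.
        preference += nudge * (target - preference)
    return preference


slight_lean_toward_a = simulate_feed(0.55, rounds=20)
slight_lean_away = simulate_feed(0.45, rounds=20)

print(f"Started at 0.55, ended at {slight_lean_toward_a:.2f}")
print(f"Started at 0.45, ended at {slight_lean_away:.2f}")
```

In this sketch a mild initial lean in either direction hardens into a strong one, even though each step only "reflects" what the user already preferred.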

The Autonomy Question

One reason AI feels different is the perception of agency.

Unlike a hammer or even a search engine, AI systems generate novel outputs. They simulate reasoning. They respond conversationally. They adapt.

But autonomy in AI is not consciousness — it is delegated decision space.

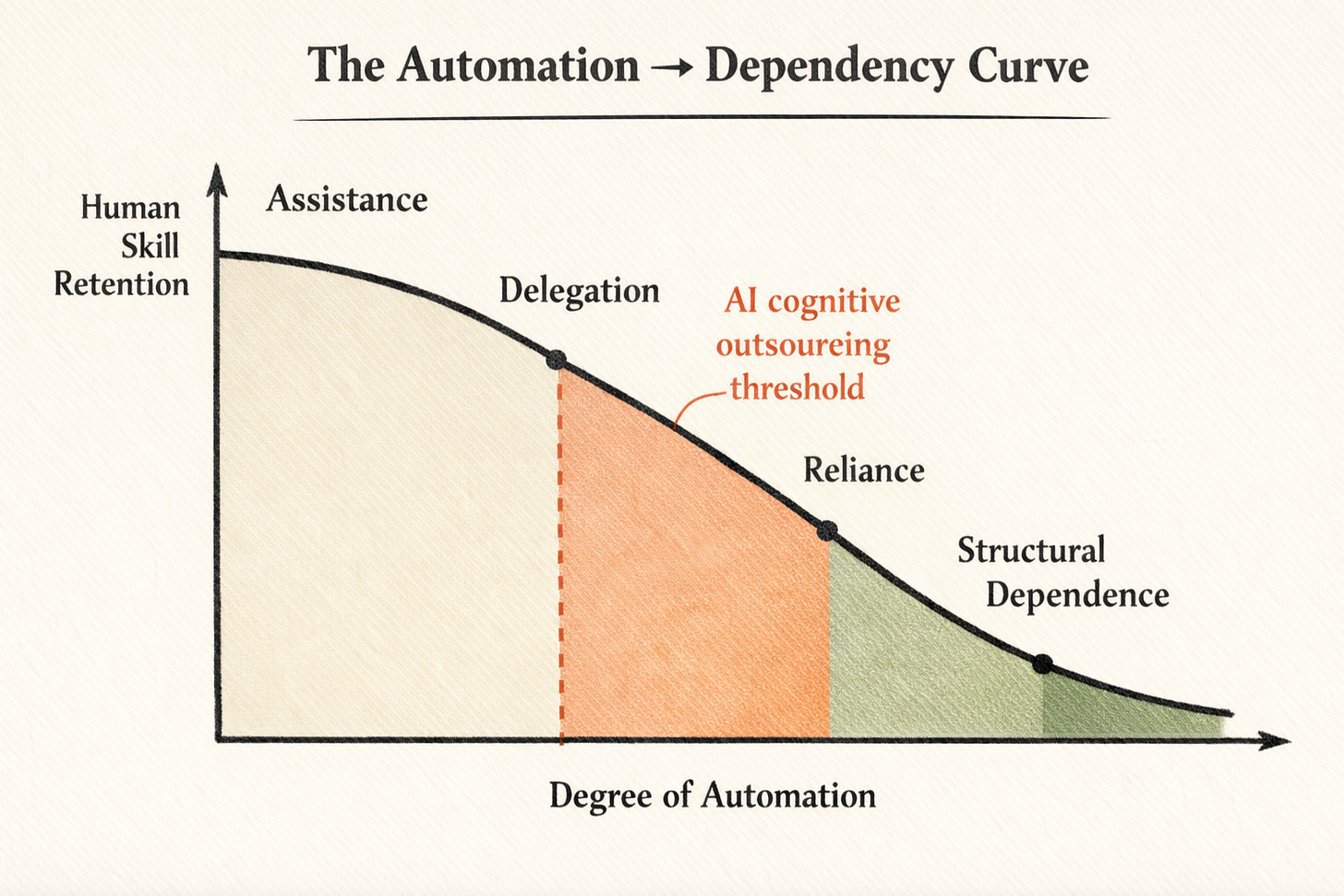

The more tasks we hand over — drafting emails, writing code, diagnosing disease, triaging legal documents — the more we outsource cognitive labor.

This creates a subtle shift:

We move from using tools

to collaborating with systems

to depending on them

to being shaped by them.

Dependency is the hinge.

When human skill atrophies because automation fills the gap, technology stops being optional. It becomes necessary. And necessity is the birthplace of structural power.

Economic Gravity

AI also alters economic gravity.

When companies deploy AI to optimize logistics, marketing, hiring, or customer service, they gain structural advantages. Early adopters scale faster. Efficiency compounds.

If participation in the economy requires AI integration, opting out becomes costly.

The printing press democratized knowledge.

Industrial machinery concentrated capital.

AI may do both simultaneously — expanding individual capability while centralizing infrastructural power in a handful of firms.

That concentration shifts the question from “How do I use this tool?” to “Who controls the system I must use?”

That’s no longer a matter of utility. It’s a matter of governance.

The Psychological Shift

The most profound transformation may not be economic or political. It may be cognitive.

Every major technology has reshaped human capacity. The compass altered navigation. The printing press altered memory. GPS altered spatial awareness. Studies suggest that habitual reliance on GPS is associated with reduced engagement of the hippocampus, a brain region involved in spatial memory. When we outsource navigation, we do not merely save time; we reconfigure skill.

Artificial intelligence represents the first large-scale outsourcing of structured thought.

We are witnessing the rise of AI agents and, more importantly, cognitive infrastructure: persistent, adaptive systems that scaffold reasoning itself. They summarize before we read, suggest before we decide, and filter before we perceive.

This is not merely automation of action. It is the architectural redesign of cognition.

We are not just automating labor. We are automating cognition.

When AI drafts first and we edit second, the architecture of reasoning subtly shifts. When summaries precede reading, synthesis precedes comprehension. When recommendation engines anticipate preference, curiosity narrows toward optimization.

This is not dystopia. It is adaptation.

But adaptation has direction.

If writing is thinking made visible, what happens when thinking becomes increasingly mediated? If problem-solving begins with machine-generated structure, do we begin to internalize that structure as default?

Technology stops being a tool when it begins shaping the cognitive environment in which thought itself occurs.

So When Does Technology Stop Being a Tool?

Technology stops being a tool when it structures the conditions under which choice occurs.

It stops being a tool when opting out becomes economically irrational.

It stops being a tool when it mediates judgment at scale.

It stops being a tool when human skill reorganizes itself around its presence.

By these standards, artificial intelligence is not merely approaching that threshold. In certain domains, such as hiring, logistics, content moderation, and financial modeling, it has already crossed it.

The language of “tool” persists because it comforts us. But the functional reality is infrastructural.

There is a comforting narrative that “human-in-the-loop” systems preserve control.

But control is not binary. It is a gradient.

When dependency sets in — when systems become too complex, too fast, or too embedded to operate without — oversight becomes ceremonial. The human signs off on outputs they did not generate, often under constraints they did not design.

At that point, the tool no longer asks for permission. It sets the tempo.

We begin to confuse usability with sovereignty.

And that confusion may be the defining governance challenge of artificial intelligence.

Of course, one could argue that every transformative technology has triggered similar anxieties. Humans adapt. Skills evolve. What feels like dependency may simply be transition.

But artificial intelligence differs in one critical way: it operates not only in the physical world, but in the cognitive layer itself. It does not merely change what we do. It changes how we decide.

That is a deeper shift.

A Path Forward: Designing for Agency

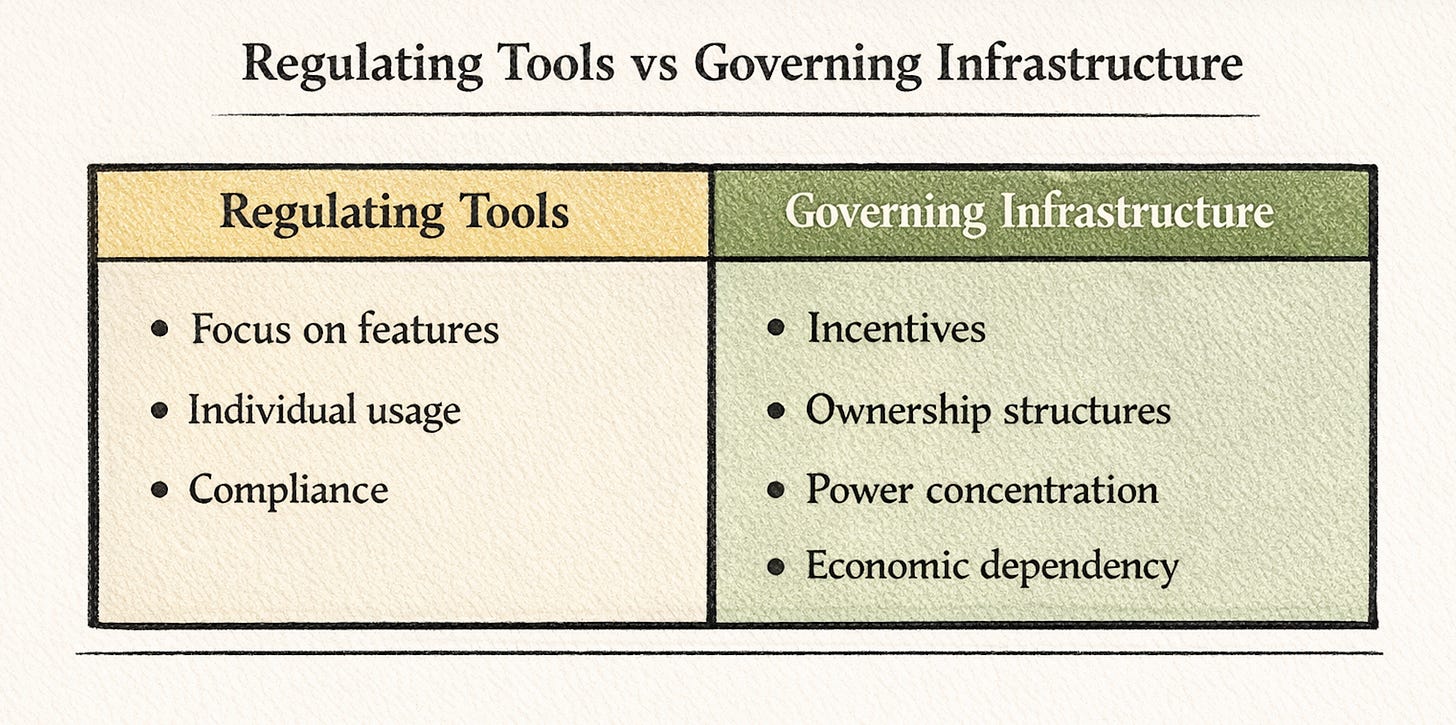

If artificial intelligence is becoming infrastructure, then responsibility shifts from individual usage to systemic design.

We cannot regulate it like a hammer.

We must govern it like infrastructure.

This includes:

1. Transparency

Understanding training data, model limitations, and deployment contexts.

2. Human-in-the-Loop Systems

Preserving meaningful oversight in domains like medicine, law, and public policy.

3. Cognitive Preservation

Encouraging educational systems that develop foundational skills rather than outsourcing them prematurely.

4. Distributed Ownership

Avoiding extreme centralization of AI infrastructure that consolidates disproportionate influence.

5. Cultural Literacy

Teaching not just how to use AI, but how AI shapes incentives and perception.

The goal is not to prevent AI from evolving beyond tool status. That may be inevitable.

The goal is to ensure that as it becomes environment, it remains aligned with human flourishing rather than displacing it.

The Final Answer

The question is no longer whether artificial intelligence is a tool.

The question is whether we recognize the moment it becomes environment.

Environments shape behavior silently. They reward certain actions and discourage others. They recalibrate norms without announcement.

If AI becomes the cognitive, economic, and informational substrate through which decisions flow, then governance must move beyond regulating features and toward shaping incentives and power structures.

This is not a call to halt innovation. It is a call to understand scale.

The moment our creations stop asking for permission is not the moment they become conscious. It is the moment they become cognitive infrastructure.

It is the moment intelligence shifts from tool to terrain.

And when intelligence becomes terrain, governance must evolve from usage oversight to architectural responsibility.

Artificial intelligence is not replacing humanity. It is redesigning the terrain upon which humanity operates.

The risk is not sudden domination. It is gradual normalization.

We will not wake up one morning controlled by machines. We will wake up one morning unable to imagine functioning without them.

Dependence rarely announces itself. It feels like convenience.

And that difference matters.

A. Pawlowski | The Strategy Stack

When a tool fails, it is a bug. When an agent fails, it is a leadership crisis. We are being told to step back and let AI act on our behalf. As a Senior Engineering Manager, I know that you can delegate the task, but you can never delegate the responsibility.

If we lose the "craft" of doing the work, we lose the intuition needed to catch the agent when it drifts. We risk becoming managers of black boxes.

Leadership isn't just about orchestrating the most efficient system. It is about being the person who can explain why a decision was made when the system fails. AI agents can give us speed, but they can't give us a conscience.